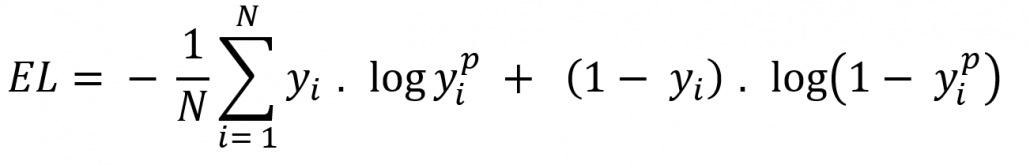

Binary cross-entropy, also known as binary log loss or binary cross-entropy loss, is a commonly used loss function in machine learning, particularly in binary classification problems. It is designed to measure the dissimilarity between the predicted probability distribution and the true binary labels of a dataset.

Binary Cross-Entropy / Log Loss:

$$-\frac{1}{N}\sum_{i=1}^{N}\left[\, y_i \log(p(y_i)) + (1 - y_i)\log(1 - p(y_i)) \,\right]$$

where y is the label (1 for green points and 0 for red points) and p(y) is the predicted probability of the point being green, for all N points. Reading this formula, it tells you that, for each green point (y = 1), it adds log(p(y)) to the loss, that is, the log probability of it being green. For each red point (y = 0), it adds log(1 - p(y)) instead, that is, the log probability of it being red.

If it is a multiclass problem, you have to use categorical cross-entropy instead. The labels also need to be converted into the categorical format.

Classification with Deep Neural Networks and Logistic Loss, by Zihan Zhang and 2 other authors

Abstract: Deep neural networks (DNNs) trained with the logistic loss (i.e., the cross entropy loss) have made impressive advancements in various binary classification tasks. However, generalization analysis for binary classification with DNNs and logistic loss remains scarce. The unboundedness of the target function for the logistic loss is the main obstacle to deriving satisfactory generalization bounds. In this paper, we aim to fill this gap by establishing a novel and elegant oracle-type inequality, which enables us to deal with the boundedness restriction of the target function, and using it to derive sharp convergence rates for fully connected ReLU DNN classifiers trained with logistic loss. In particular, we obtain optimal convergence rates (up to log factors) only requiring the Hölder smoothness of the conditional class probability $\eta$ of data. Moreover, we consider a compositional assumption that requires $\eta$ to be the composition of several vector-valued functions of which each component function is either a maximum value function or a Hölder smooth function only depending on a small number of its input variables. Under this assumption, we derive optimal convergence rates (up to log factors) which are independent of the input dimension of data. This result explains why DNN classifiers can perform well in practical high-dimensional classification problems. Besides the novel oracle-type inequality, the sharp convergence rates given in our paper also owe to a tight error bound for approximating the natural logarithm function near zero (where it is unbounded) by ReLU DNNs. In addition, we justify our claims for the optimality of rates by proving corresponding minimax lower bounds.
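To make the binary cross-entropy (logistic) loss discussed in this post concrete, here is a minimal sketch in plain Python. The function name `binary_cross_entropy`, the clipping constant `eps`, and the sample labels and probabilities are illustrative assumptions, not taken from the paper or from any particular library. The clipping step is there because the logarithm is unbounded near zero, so a predicted probability of exactly 0 or 1 would otherwise produce an infinite loss.

```python
import math


def binary_cross_entropy(labels, probs, eps=1e-12):
    """Mean binary cross-entropy (log loss) over N points.

    labels: iterable of 0/1 labels (1 = "green", 0 = "red").
    probs:  predicted probability that each point is green.
    eps:    hypothetical clipping constant that keeps log() finite.
    """
    labels = list(labels)
    probs = list(probs)
    if not labels or len(labels) != len(probs):
        raise ValueError("labels and probs must be non-empty and equally long")

    total = 0.0
    for y, p in zip(labels, probs):
        # Clip p into [eps, 1 - eps] because log is unbounded near zero.
        p = min(max(p, eps), 1.0 - eps)
        # Green points contribute log(p); red points contribute log(1 - p).
        total += y * math.log(p) + (1 - y) * math.log(1.0 - p)

    # Negate and average over all N points.
    return -total / len(labels)


# Made-up example: two green points and two red points.
labels = [1, 1, 0, 0]
probs = [0.9, 0.6, 0.2, 0.4]
loss = binary_cross_entropy(labels, probs)
print(f"{loss:.4f}")
```

Confident, correct predictions (probabilities close to the true label) push the loss toward zero, while confident wrong predictions are penalized heavily because log(p) grows without bound as p approaches zero.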
